TinyML and Machine Learning on Edge Devices

The rapid proliferation of Internet of Things (IoT) devices and connected systems has transformed the way we interact with technology. Traditional cloud-based machine learning models often face challenges in latency, bandwidth, and energy efficiency when processing data from these devices. TinyML, or tiny machine learning, addresses these issues by enabling AI to run directly on small, low-power edge devices.

TinyML allows for the deployment of machine learning models on microcontrollers and other constrained hardware. This approach reduces dependency on cloud computing, allowing real-time data processing and immediate decision-making. Applications range from wearable health devices and smart home sensors to industrial monitoring and autonomous systems.

This post explores the concept of TinyML, its advantages, real-world applications, technical considerations, challenges, and future trends. By understanding TinyML, businesses and developers can harness edge computing to create more responsive, efficient, and intelligent devices.

Understanding TinyML

What Is TinyML

TinyML refers to the deployment of machine learning algorithms on resource-constrained devices such as microcontrollers and low-power sensors. Unlike traditional ML models that rely on cloud processing, TinyML models run locally, enabling real-time predictions and actions without constant internet connectivity.

These models are optimized for minimal memory usage, low power consumption, and fast execution, because a typical microcontroller offers only kilobytes of RAM and a modest clock speed. TinyML brings on-device intelligence to hardware that previously could only collect data and forward it elsewhere for analysis.

Key Components of TinyML

The core components of TinyML include the machine learning model, embedded hardware, and data acquisition sensors. Models are typically lightweight and quantized to fit limited memory and processing capabilities. Embedded hardware, such as ARM Cortex-M microcontrollers, provides the platform for computation, while sensors capture real-time data for analysis.

This combination allows devices to operate independently while maintaining accuracy and efficiency in decision-making.

Evolution of Edge AI

The concept of edge computing has evolved alongside IoT growth. Initially, devices sent data to centralized servers for processing, resulting in latency and bandwidth challenges. TinyML extends edge computing by embedding intelligence directly on the device, enabling immediate responses and reducing reliance on cloud infrastructure.

Advantages of TinyML

Real-Time Data Processing

One of the main advantages of TinyML is its ability to process data in real time. This is critical for applications such as wearable health monitors, predictive maintenance sensors, and autonomous robots, where delays can affect performance or safety.

Processing locally reduces latency and allows for faster decision-making compared to cloud-based systems that rely on remote servers.

Low Power and Cost Efficiency

TinyML models are designed for low-power operation, making them suitable for battery-operated devices. This efficiency extends device life, reduces energy costs, and enables deployment in remote or resource-limited environments.

Additionally, the reduced reliance on cloud infrastructure lowers operational costs while maintaining AI capabilities at the edge.
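To make the low-power argument concrete, here is a rough back-of-the-envelope sketch of how duty cycling (waking briefly to run inference, then sleeping) affects battery life. The function names and every number in the example are illustrative assumptions, not measurements from any particular device, and the estimate ignores real-world effects such as battery self-discharge and voltage droop.

```python
def average_current_ma(active_ma, sleep_ma, duty_cycle):
    """Time-weighted average current for a device that alternates
    between an active state and a sleep state."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma


def battery_life_hours(capacity_mah, active_ma, sleep_ma, duty_cycle):
    """Idealized battery life: capacity divided by average current."""
    avg = average_current_ma(active_ma, sleep_ma, duty_cycle)
    if avg <= 0.0:
        raise ValueError("average current must be positive")
    return capacity_mah / avg


# Hypothetical device: small coin cell, awake 1% of the time.
hours = battery_life_hours(
    capacity_mah=220.0,  # assumed battery capacity
    active_ma=5.0,       # assumed current while sensing and inferring
    sleep_ma=0.005,      # assumed deep-sleep current
    duty_cycle=0.01,     # assumed fraction of time spent awake
)
print(f"Estimated battery life: {hours / 24:.0f} days")
```

The takeaway is that the duty cycle tends to dominate the result: the less time the device spends awake, the closer its average draw gets to the sleep current.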

Enhanced Privacy and Security

Running AI models locally on edge devices improves privacy and security. Sensitive data does not need to be transmitted to the cloud, minimizing exposure to potential breaches. For example, TinyML-powered health devices can analyze user data on-device without sharing personal information externally.

This local processing fits well with increasingly strict privacy regulations and growing concerns around data security.

Applications of TinyML

Smart Home and Wearable Devices

TinyML enables smart home devices to perform tasks such as voice recognition, motion detection, and environmental monitoring locally. Wearables like fitness trackers can analyze biometric data in real time, providing immediate feedback to users.

By performing AI computations on-device, these systems become more responsive, reliable, and less dependent on cloud connectivity.

Industrial IoT and Predictive Maintenance

In industrial environments, TinyML is used for predictive maintenance and anomaly detection. Sensors embedded in machinery can detect irregular vibrations, temperature fluctuations, or other signs of wear and tear. By processing this data locally, companies can catch early warning signs before equipment fails, reduce downtime, and optimize operational efficiency.
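As a rough illustration of on-device anomaly detection, the sketch below flags sensor readings that sit far from a running baseline. It uses a simple statistical z-score test as a stand-in for a trained model, and the class name, threshold, warm-up count, and readings are all assumptions chosen for the example. The running statistics use Welford's online algorithm, which needs only three numbers of state and therefore suits memory-constrained hardware.

```python
import math


class RunningStats:
    """Welford's online mean and variance, using constant memory."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self):
        # Population standard deviation of the samples seen so far.
        return math.sqrt(self.m2 / self.n) if self.n > 1 else 0.0


def is_anomaly(stats, x, threshold=3.0, warmup=10):
    """Flag x if it lies more than `threshold` standard deviations
    from the baseline. Returns False until enough samples are seen."""
    if stats.n < warmup or stats.std == 0.0:
        return False
    return abs(x - stats.mean) / stats.std > threshold


# Hypothetical vibration amplitudes; the last one is a spike.
baseline = RunningStats()
readings = [1.0, 1.2, 1.1, 0.9, 1.0, 1.1, 1.2, 0.9, 1.0, 1.1, 1.0, 4.8]
for value in readings:
    if is_anomaly(baseline, value):
        print(f"Anomaly detected: {value}")
    else:
        baseline.update(value)  # only normal readings update the baseline
```

A production system would more likely run a small trained model on extracted features, but the structure (keep a compact baseline, score each new reading, act locally) stays the same.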

Autonomous Systems and Robotics

Autonomous drones, robots, and vehicles can use TinyML for on-device navigation, obstacle detection, and decision-making tasks. On-device processing enables quick responses to dynamic environments, improving safety and performance without relying on high-bandwidth cloud connections.

Technical Considerations for TinyML

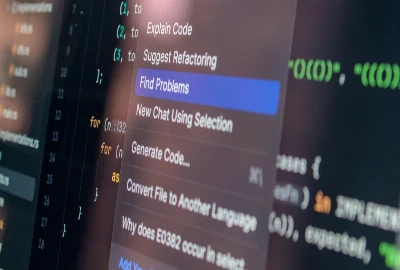

Model Optimization and Quantization

To fit on resource-constrained devices, TinyML models must be optimized through techniques such as pruning, quantization, and compression. These approaches reduce memory usage and computation requirements while aiming to preserve as much of the model's accuracy as possible.
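To give a feel for pruning, here is a minimal sketch of magnitude-based pruning on a flat list of weights. The function name and the example weights are made up for illustration. Real toolchains typically prune gradually during training and fine-tune afterwards, which this sketch does not attempt.

```python
def prune_by_magnitude(weights, sparsity):
    """Return a copy of `weights` with the smallest-magnitude
    fraction (`sparsity`, between 0 and 1) set to zero."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Hypothetical weights from a single layer.
layer = [0.9, -0.05, 0.4, 0.01, -0.7, 0.2]
print(prune_by_magnitude(layer, sparsity=0.5))
```

Zeroed weights only save memory and compute if the storage format or runtime can exploit the sparsity, so pruning is usually paired with compression or a sparse-aware kernel.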

Quantization, for example, converts floating-point model weights to lower-bit representations. Storing weights as 8-bit integers instead of 32-bit floats cuts weight storage to roughly a quarter, usually with only a small loss in accuracy.
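The sketch below shows one common way to do this: affine (scale plus zero-point) quantization to signed 8-bit integers. It is a simplified, plain-Python illustration rather than the implementation used by any specific framework, and the function names and sample weights are assumptions for the example.

```python
def quantize_int8(weights):
    """Map floats to integers in [-128, 127] with an affine scheme.
    Returns (quantized values, scale, zero_point)."""
    # Extend the range to include 0.0 so zero is exactly representable.
    w_min = min(min(weights), 0.0)
    w_max = max(max(weights), 0.0)
    scale = (w_max - w_min) / 255.0
    if scale == 0.0:
        scale = 1.0  # all weights are zero; any scale works
    zero_point = round(-128 - w_min / scale)
    quantized = [
        max(-128, min(127, round(w / scale) + zero_point))
        for w in weights
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float values from quantized integers."""
    return [(q - zero_point) * scale for q in quantized]


# Hypothetical weights.
weights = [-1.0, -0.25, 0.0, 0.4, 0.8]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)
for original, approx in zip(weights, restored):
    print(f"{original:+.4f} -> {approx:+.4f}")
```

Each restored value lands close to the original, within about one quantization step (`scale`). That small rounding error is the price paid for the smaller footprint.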

Embedded Hardware and Sensors

Selecting appropriate hardware is critical for TinyML success. Microcontrollers with sufficient processing power, memory, and energy efficiency are essential. Additionally, sensors must provide accurate and reliable data for the models to function correctly.

Designers must balance performance, cost, and power consumption to achieve optimal results.
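One quick sanity check during hardware selection is whether the model's weights fit in the target's flash at all. The sketch below estimates weight storage from the parameter count and bit width. The parameter count and flash budget are hypothetical, and the estimate deliberately ignores activation buffers, the inference runtime, and application code, all of which need space too.

```python
def model_size_bytes(num_params, bits_per_weight):
    """Approximate storage for the weights alone, rounded up to whole bytes."""
    return (num_params * bits_per_weight + 7) // 8


FLASH_BUDGET_BYTES = 256 * 1024  # hypothetical flash reserved for the model
NUM_PARAMS = 100_000             # hypothetical model size

for bits in (32, 8):
    size = model_size_bytes(NUM_PARAMS, bits)
    verdict = "fits" if size <= FLASH_BUDGET_BYTES else "does not fit"
    print(f"{bits}-bit weights: {size} bytes, {verdict}")
```

Under these assumed numbers, the 32-bit version exceeds the budget while the 8-bit version fits, which is why quantization and hardware selection are usually decided together.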

Data Management and Preprocessing

Even on small devices, data preprocessing is necessary to normalize inputs, remove noise, and extract meaningful features. Efficient preprocessing helps TinyML models stay accurate within tight compute and memory budgets.
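The three steps mentioned above can be sketched in a few lines: a moving average to smooth noise, standardization to normalize inputs, and a root-mean-square (RMS) value as a simple feature. The function names, window size, and sample data are illustrative assumptions. On a real microcontroller this logic would usually be written in C or C++ with fixed-size buffers, but the arithmetic is the same.

```python
import math


def moving_average(samples, window):
    """Smooth a signal with a trailing moving average.
    Early outputs average over however many samples are available."""
    out = []
    running = 0.0
    for i, s in enumerate(samples):
        running += s
        if i >= window:
            running -= samples[i - window]  # drop the sample leaving the window
        out.append(running / min(i + 1, window))
    return out


def normalize(samples):
    """Standardize to zero mean and unit variance."""
    mean = sum(samples) / len(samples)
    var = sum((s - mean) ** 2 for s in samples) / len(samples)
    std = math.sqrt(var) or 1.0  # avoid dividing by zero on constant input
    return [(s - mean) / std for s in samples]


def rms(samples):
    """Root-mean-square amplitude, a common vibration feature."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


# Hypothetical raw accelerometer window.
raw = [0.1, 0.5, -0.2, 0.4, 0.0, 0.6, -0.1, 0.3]
smoothed = moving_average(raw, window=3)
features = normalize(smoothed)
print(f"RMS of raw window: {rms(raw):.3f}")
```

Whatever preprocessing is used on the device should match what was applied to the training data, otherwise the model sees inputs it was never trained on.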